A question of ethics: illegal discoveries during a penetration test

Sometimes evidence of crime can be found during penetration tests, so what do you do? Following some research on Twitter, I'll discuss the various dilemmas professionals face.

During a penetration test it's possible you'll come across evidence of illegal activity, so do you report that to law enforcement or just gloss over it? I turned to the cyber security community on Twitter with a poll to find out other people's views, and those views form a large part of this post. I'd like to thank everyone who participated, and I hope this post gives a fair summary of what was a fascinating discussion.

Framing the question

My question to Twitter was "if you find something illegal during a pen test, would you report to the authorities?". Very early on I received responses pointing out that my question was ambiguous (for example, because laws differ between jurisdictions) and mentioning crimes that exist in some countries but that I hadn't thought of, given my British context. Lesley Carhart (@hacks4pancakes) put it like this:

Boy.... define "illegal". It can vary vastly by country. Are you asking if I'd get a gay employee in a country that criminalizes it arrested?

— Lesley Carhart (@hacks4pancakes) May 22, 2019

Homosexuality isn't a crime in Britain (anymore; it used to be), so that hadn't crossed my mind. My main thinking was more towards what some would consider extremes (gross financial misconduct, child abuse, murder, etc.), so this led to some really interesting discussions. I've certainly noted the need to be more specific with my poll questions in future, although the ambiguity did prompt some fascinating comments, as I'll come on to later.

Important point to note:

We tend to work in global enterprise environments, and what is illegal in one state or country can be very different from another one.

(Lesley Carhart (@hacks4pancakes))

I also spoke to a pen testing friend who said it would depend on a) what was found and b) whether the tester had done illegal things themselves in the past. The suggestion was that those who had broken the law historically may be more sympathetic to those they find breaking the law. There's also the possibility of being investigated yourself if you report something.

Most of the data for this blog post comes from my Twitter poll, so, on to the results.

Results

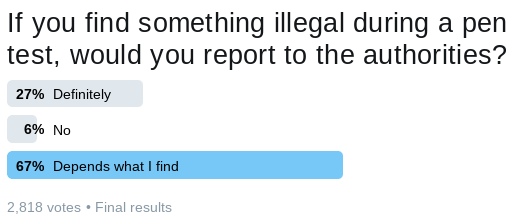

It's fair to say I've never had a tweet get so much exposure (thanks to everyone who responded or retweeted): 2,818 votes over 7 days, with most of the votes having arrived by day three. Apparently the tweet itself had "80,964 impressions".

On their own, these results aren't very helpful. 27% of respondents would definitely report the illegal activity, 6% would not and, importantly from a discussion point of view, 67% might report it depending on what they found. "Depends" was in the lead right from the outset, which I think probably matches what we all do in real life. For example, I see many cyclists riding through red lights and many drivers using their mobile phones while driving (both illegal in my context). Do I call the police for each of these? No! The police haven't the resources to deal with every one of these indiscretions.

A group sitting between two cars in a car park and setting off fireworks? I absolutely called 999 on that one (911 for American readers), as there was a threat to life.

Crime rankings

Comments attached to the poll showed there was a ranking of crimes in people's minds, and this affected their responses. Many of the comments about the type of crime explained the "depends" votes. Some also commented that they would respond differently if what they discovered was morally wrong as well as illegal. To give an example from a while back, outside the realms of cyber security: companies such as Starbucks made the news because they paid very little UK tax. When you actually dig into UK tax law it's clear that Starbucks et al. weren't doing anything illegal, but many considered their behaviour morally wrong.

So, going back to ICT and the digital realm, some suggested that a licensing violation (where a company is illegally using copies of software) might only be reported if it was widespread across the organisation. Finding a single hooky copy of an application may merely result in the violation being noted in the report:

Depends. Organized crime? Yes. Software license violations? Depending on how severe, it would be raised with the client first, and definitely go in the report, but I wouldn't call the cops. I'd use a healthy dose of common sense.

— D K (@kln_nurv) May 22, 2019

Not surprisingly (given my context, and the fact that I'm a volunteer youth worker), crimes involving child abuse were almost unanimously in the "will report" box. Some said they'd immediately call the FBI; others noted that "there is no street code for child abusers".

Reporting to client managers

Rather than reporting straight to the police (or similar), some suggested reporting problems to managers at the client first, to allow them to sort out the problem. Software piracy came up again here, with some stating this issue should just go in your penetration test report, while others gave me the impression they'd raise it with management sooner.

Another important point was that the seniority of the client employee involved may matter, as the test may have been arranged specifically to root out internal issues (thanks @FerfeLaBat).

Ethics / NDA / contracts

Before a penetration test begins, the tester (or testing company) and the client sign a contract. This contract covers the rules of engagement (what will be tested and how) but, importantly, contracts vary, so it's important to be familiar with the terms you're working under.

Non-disclosure is often part of the contract too; otherwise there'd be no legal recourse if a tester revealed findings to others. There may also be a separate non-disclosure agreement (NDA). Local law comes into play again here, as some territories would deem an NDA unenforceable if it attempted to prevent the reporting of a crime. Other laws may make a person complicit in a crime if they know about it but don't report it.

One of the most interesting points related to the ethics of breaking your contractual obligations:

Honestly if you don’t respect contractual obligations, your ethics mean nothing. You report findings to the covered entity to whom you are contracted. Period.

— MK Hamilton (@seattlemkh) May 22, 2019

Contract content seems to be the solution here too. A number of people suggested the contract should clearly set out what the tester (or testing company) intends to do in the event of finding something illegal. By including that in the contract, it's possible to respect your contractual obligations, the law and your ethics.

Caveat: ethics, law and morals vary by person and jurisdiction. If in doubt, consult a lawyer.

Assigning guilt

Given the world we live in, with hacks happening daily, it's important to note that assigning guilt isn't trivial (I mentioned this in a previous post about investigating users). It's possible that the illegal material you've discovered during the penetration test was actually placed on your client's system by a third party who had hacked it.

Much like a forensic analyst, the penetration tester cannot apportion blame; that task lies with law enforcement, lawyers and judges. Not having the full facts seems to be another reason some people voted "depends".

Including in the test report

In almost every case I'd include the discovery of illegal activity in my report, and others commented similarly. If I was going to omit something I'd have to take legal advice first, for example if including the finding in my report was likely to cause harm to an individual.

To be fair to the client my tone would have to be factual ("it was found that key generators for proprietary software existed on the following devices ...") rather than accusatory ("your company clearly breaks the law regularly"). That said, the entire report should be written to present facts, rather than applying spin or bias, so that should be par for the course.

Conclusions

Responses were truly fascinating and I'm glad I took the time to gather some other viewpoints before I wrote this post. I tend to be quite a "black and white" person so ambiguous cases like this aren't easy for me.

Almost across the board, the respondents who commented on types of crime said that human rights violations, child abuse or endangerment of life would lead them to report to the authorities. I agree with that stance, as I feel we all have a moral obligation to stand up for those unable to stand up for themselves.

At the end of the day, I'd want to seek legal advice and think about the details very carefully.

Finally, this was a very sobering point:

In virtually any state on earth, it is nearly impossible to live without breaking *some* law, violating *some* statute.

— Adam Thompson (@athompso99) May 23, 2019

Network contents reflect lives of users, to greater or lesser extent.

So... Yeah, it depends. The server in Canada is breaking Nigerian laws? *shrug*

Further reading

I was directed to the Non-Compliance with Laws and Regulations (NOCLAR) standard, which is aimed at accountants (thanks @budzeg). An auditor who finds a client to be non-compliant is advised to contact the client's management team first, going to the authorities only if necessary. I'm not aware of a similar framework within ICT at the moment (please do tweet me if you know of one).

If you're interested in reading all of the responses, the full thread is here.

The banner image is a word cloud based on some words that sprang to mind.